The Advent of GenAI

To understand the drivers for AI Security, we must first understand the seismic impact AI is having on the world of technology. Not since the dawn of the internet age has a technology revolution seen such immediate uptake or shown such promise for the future.

It is no surprise that Alan Turing, one of the pioneers of computer science, thought deeply about the question, “Can machines think?” Turing belonged to an informal group of researchers (the Ratio Club) that debated machine intelligence, and in 1948 he wrote a paper titled Intelligent Machinery. In it, he even rejected the claim that an intelligent machine had to be free of mistakes, a point that remains relevant to today’s objection that Large Language Models (LLMs) hallucinate and are therefore not intelligent.

That paper was not formally published until 1968. In 1950, Turing published his landmark paper on the subject, Computing Machinery and Intelligence, in which he proposed what is now known as the “Turing Test” for determining whether a machine exhibits intelligence: could a machine win the “Imitation Game,” in which a human judge cannot determine which of two contestants is human and which is a machine?

Even at the dawn of the computer age, before transistors and silicon chips, humans were thinking about thinking machines, or artificial intelligence.

The 1956 Dartmouth Summer Research Project on Artificial Intelligence brought together researchers from varied disciplines. It helped launch work on symbolic reasoning and logic in computer science, as well as the study of the human brain as a model for neural networks.

AI took a back seat during the computer revolution of the 1990s, when both supercomputers and personal computers were making their impact. In 1996, IBM’s supercomputer Deep Blue took on Garry Kasparov, the reigning World Chess Champion, in an official match. Deep Blue was essentially a powerful computer with access to the historical record of previously played games. Kasparov beat it 4-2, but a rematch the next year went to Deep Blue 3½-2½.

While Deep Blue did not move the needle much, it demonstrated to the world that a previous bastion of human intellect, chess mastery, could succumb to machines. Chess and machines coalesced once more in 2010 with the founding of DeepMind, a UK-based AI startup founded by Demis Hassabis, a former game designer and chess prodigy. As a child, Hassabis bought his first computer with money earned from winning chess tournaments and taught himself how to code, paving the way to his start in both game design and AI development.

DeepMind, whose co-founders include Shane Legg and Mustafa Suleyman, was acquired by Google in 2014. Just two years later, in 2016, the company’s AlphaGo program defeated Lee Sedol, one of the world’s top players of the board game Go. This was considered a much bigger accomplishment than IBM’s Deep Blue, which relied on a brute-force approach to master chess. AlphaGo used neural networks, combined with tree search, to conquer a far more complex game.

In 2017 the DeepMind team released a paper describing a new system, AlphaZero, that trained itself. Five thousand first-generation Tensor Processing Units (TPUs), specialized AI chips, were used to generate games while 64 second-generation TPUs trained the neural networks. Starting from zero, with nothing but the rules of the game, AlphaZero plays millions of games against itself to learn. There is no preloading of historical games and no playbook; it teaches itself through self-play, a form of reinforcement learning. That same year AlphaZero beat the top chess engine of the time, Stockfish 8, with 28 wins, 72 draws, and no losses in 100 games. Also in 2017, eight researchers at Google published one of the most cited papers of the 21st century, Attention Is All You Need. It described the Transformer, an architecture based on “attention,” which became the basis for today’s Large Language Models.
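AlphaZero’s learn-by-self-play loop can be illustrated at toy scale. The Python sketch below is a simplified stand-in, not DeepMind’s method: AlphaZero pairs a deep neural network with Monte Carlo tree search, whereas this example uses a small lookup table and Q-learning on a hypothetical take-away game (players remove 1 to 3 stones; whoever takes the last stone wins). As with AlphaZero, the only input is the rules; everything else is learned from games the agent plays against itself. All function names and parameter values are illustrative assumptions.

```python
import random

MAX_TAKE = 3  # toy rules (assumed): remove 1-3 stones per turn; taking the last stone wins


def legal_moves(stones: int) -> list[int]:
    """Moves available when `stones` remain."""
    return list(range(1, min(MAX_TAKE, stones) + 1))


def train_by_self_play(max_pile: int = 12, episodes: int = 20_000,
                       alpha: float = 0.5, epsilon: float = 0.3,
                       seed: int = 0) -> dict[tuple[int, int], float]:
    """Learn move values purely from games the agent plays against itself.

    q[(stones, take)] is scored from the viewpoint of the player about to move:
    values near +1 mean the move wins with best play, near -1 mean it loses.
    """
    rng = random.Random(seed)
    q = {(n, a): 0.0 for n in range(1, max_pile + 1) for a in legal_moves(n)}

    for _ in range(episodes):
        stones = rng.randint(1, max_pile)  # start each game from a random pile
        while stones > 0:
            moves = legal_moves(stones)
            # Epsilon-greedy: usually play the best-known move, sometimes explore.
            if rng.random() < epsilon:
                take = rng.choice(moves)
            else:
                take = max(moves, key=lambda a: q[(stones, a)])
            remaining = stones - take
            if remaining == 0:
                target = 1.0  # this move took the last stone and won
            else:
                # The same agent now plays the other side; its best result is our worst.
                target = -max(q[(remaining, a)] for a in legal_moves(remaining))
            q[(stones, take)] += alpha * (target - q[(stones, take)])
            stones = remaining  # hand the turn to the other "copy" of the agent
    return q


def best_move(q: dict[tuple[int, int], float], stones: int) -> int:
    """Greedy move under the learned values."""
    return max(legal_moves(stones), key=lambda a: q[(stones, a)])


if __name__ == "__main__":
    q = train_by_self_play()
    for pile in range(1, 13):
        print(pile, "->", best_move(q, pile))
```

If training works as intended, `best_move` should discover the known winning strategy for this game, leaving the opponent a multiple of four stones (taking `pile % 4` whenever that is nonzero), even though that rule was never programmed in.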

OpenAI, of course, was founded in 2015 with the ambitious goal of creating artificial general intelligence (AGI). It released its first LLM, GPT-1, in 2018. GPT-1 relied on attention-based Transformers, had 117 million parameters, and was trained on the Toronto Book Corpus of self-published works scraped from Smashwords: about 7,000 books and 985 million words. GPT stands for Generative Pre-trained Transformer. Generative means that it generates content: text, and now images, audio, and video. Pre-trained means it is trained on a large dataset before being fine-tuned. Transformer refers to the attention-based architecture that triggered the building of modern LLMs.

ChatGPT. A Shot Heard ‘Round the World.

On November 30, 2022, OpenAI released ChatGPT, a simple chat utility based on GPT-3.5. Everything changed.

Here is the full text of the announcement from OpenAI:

We’ve trained a model called ChatGPT, which interacts in a conversational way. The dialogue format makes it possible for ChatGPT to answer follow-up questions, admit its mistakes, challenge incorrect premises, and reject inappropriate requests. ChatGPT is a sibling model to InstructGPT, which is trained to follow an instruction in a prompt and provide a detailed response.

We are excited to introduce ChatGPT to get users’ feedback and learn about its strengths and weaknesses. During the research preview, usage of ChatGPT is free. Try it now at chatgpt.com.

The announcement also cautioned that answers could be incorrect, while noting “…substantial reductions in harmful and untruthful outputs achieved by the use of reinforcement learning from human feedback (RLHF).”

Word spread on Twitter. On the first day there were around 153,000 visits to chat.openai.com.

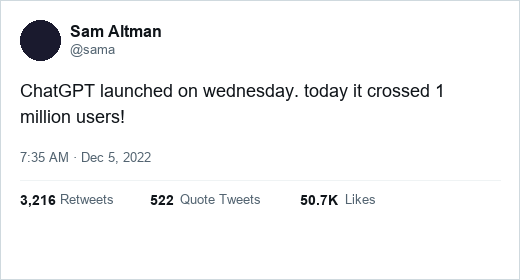

One week later, OpenAI CEO Sam Altman tweeted:

By the end of two weeks, ChatGPT had 58 million visits. Within two months, it surpassed 100 million users, reportedly the fastest uptake of any consumer application up to that time. Even OpenAI was not prepared for the explosive response. The launch kicked off a race that, over the ensuing three years, has produced bigger and bigger models from OpenAI, Google, Anthropic, xAI (maker of Grok), and others worldwide.

An entire industry was created faster than ever before in history. By comparison, it took approximately 70 years for steam power to achieve widespread use following the invention of Thomas Newcomen’s steam engine in 1712.

It took 24 years to go from the transistor to the first computer on a chip (the Intel 4004). AI went from the invention of Transformers to ChatGPT in five years, and the three years since ChatGPT’s launch have been astounding.

The AI Boom

Nina Schick, an AI expert, advisor and public speaker, recently spoke about the explosive growth of AI in a LinkedIn post:

“Three years ago, a million tokens of AI inference cost $60. Today? Six cents. A 99.9% cost collapse. When something this powerful becomes this cheap, it doesn’t stay confined to research labs, it is able to flood the economy. AI is now diffusing faster than any technology in history — into every industry, every workflow, every device; 800 million people use ChatGPT weekly. And we’re still nowhere near the high watermark of what’s possible.”

How do you train a Large Language Model? With a lot of compute cycles. And the fastest chips for doing the required math? Graphics Processing Units (GPUs). If you ever get the chance to go back in time (who knows, maybe AI will make time travel possible), buy shares of Nvidia, which traded at just $16.91 per share (adjusted for its 2024 ten-for-one stock split) on November 30, 2022. Its market cap was $422 billion at the time. It was already a fast-growing company, in part because its GPUs are also used to mine cryptocurrencies. Three years later, Nvidia is hovering between $180 and $200 per share, with a market cap well over $4 trillion, making it one of the most valuable companies in the world, bigger than Microsoft, Apple, Google, or Meta.

As we go to press, OpenAI is floating the idea of an IPO at a $1 trillion valuation and has announced an additional $110 billion investment from Nvidia, SoftBank, and Amazon. Investments in AI companies are dominating the venture capital world, and OpenAI has dominated news headlines from the beginning. When it launched as a nonprofit research lab, it had $1 billion in pledged donations from Sam Altman, Elon Musk, Reid Hoffman, Peter Thiel, AWS, Infosys, and others. In practice, only about $130 million was actually contributed in the early years.

In 2019, Microsoft announced a multiyear, $1 billion partnership with OpenAI, agreeing that Azure would be OpenAI’s exclusive cloud provider for training and serving models. This was the first indication that hyperscalers were joining the AI race, and it was a clarion call, especially to Google.

In January 2023, after the success of ChatGPT, Microsoft put in an additional $10 billion at a $29 billion valuation. By early 2024, OpenAI’s reported value had soared to $86 billion. In October 2024, its value nearly doubled to $157 billion with a new $6.6 billion investment. Thrive Capital led the round by contributing $1.3 billion; Nvidia, Microsoft, and SoftBank were among the many participants.

An additional $40 billion was raised in March 2025, bringing OpenAI’s valuation to an astonishing $300 billion. The company is reportedly seeking additional capital in 2026 to keep pace with the enormous costs of building and operating data centers.

According to CNBC, Amazon could invest as much as $50 billion in the ChatGPT maker. Meanwhile, Anthropic, a competing foundation model company, was valued at $183 billion after receiving $13 billion in funding in September 2025. For perspective, the two largest cybersecurity companies, CrowdStrike and Palo Alto Networks, are valued at $111 billion and $122 billion, respectively.

In February 2026, Anthropic closed a Series G funding round led by Coatue and GIC, the second-largest private financing round in tech history, which valued the company at $380 billion.

On a smaller scale, Paris-based Mistral AI raised $113 million in seed capital at a $260 million valuation only weeks after founding. At the time, it was the largest seed round ever for a European startup. One year later Mistral raised approximately $640 million in a Series B led by General Catalyst and others, valuing the company at around $6 billion. Mistral continued to attract investors and secured an additional $1.5 billion in September 2025. Post-money, Mistral was valued at nearly $14 billion.

Mistral wasn’t the only AI startup to raise big money in June 2023. Inflection AI raised $1.3 billion at a reported $4 billion valuation. Inflection, led by CEO Mustafa Suleyman, who previously co-founded DeepMind, said it would use the funds to build a cluster of 22,000 Nvidia H100 GPUs. That made it one of the first investments in which the hardware footprint (a world-scale GPU cluster) was explicitly the selling point. Additional AI success stories include Toronto-based Cohere, which has raised a total of $1.5 billion at a $7 billion valuation to build enterprise foundation models for regulated industries.

High valuations are often cited as evidence of market exuberance, and hyper-valuations as a sure sign that a bubble is about to burst. Yet there are also signs that we are only in the early stages of AI’s positive economic impact.

Actual Spending

In December 2024, OpenAI said its annual recurring revenue (ARR) was $5.5 billion, the equivalent of roughly 23 million subscriptions at $20 per month. The company reported $10 billion in ARR in June 2025. In a venture market where SaaS companies are often valued at more than 10x ARR, OpenAI is growing into its $300 billion valuation.
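The revenue figures in this paragraph can be checked with simple arithmetic. The short Python sketch below uses only numbers quoted in the text; the function names are illustrative.

```python
# Back-of-envelope checks for the revenue figures quoted in the text.

def subscriptions_equivalent(arr_dollars: float, monthly_price: float) -> float:
    """Number of monthly subscriptions at `monthly_price` that add up to `arr_dollars` a year."""
    return arr_dollars / (monthly_price * 12)


def revenue_multiple(valuation_dollars: float, arr_dollars: float) -> float:
    """Valuation expressed as a multiple of annual recurring revenue."""
    return valuation_dollars / arr_dollars


if __name__ == "__main__":
    subs = subscriptions_equivalent(5.5e9, 20)
    print(f"{subs / 1e6:.1f} million subscriptions")
    multiple = revenue_multiple(300e9, 10e9)
    print(f"{multiple:.0f}x ARR")
    print(f"ARR implied by a 10x multiple: ${300e9 / 10 / 1e9:.0f} billion")
```

At $10 billion in ARR, a $300 billion valuation works out to 30x ARR; at the 10x multiple cited for SaaS companies, the same valuation would correspond to roughly $30 billion in ARR.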

Not to be outdone, Anthropic’s annualized revenue increased from $1 billion to $3 billion during the first five months of 2025.

Gartner forecast that global GenAI spending would reach $644 billion in 2025. If you include infrastructure investments (data centers, chips, etc.), total spending in 2025 may have reached $1.5 trillion.

These are real and impactful investments that are being made by the top technology companies in the world. While venture capital is often criticized for exaggerating business cycles by scrambling to pour money into the next big thing, it is time to realize that AI is indeed a big thing. Adapting to a new world enhanced by AI everywhere is going to drive technology, and thus the economy, to greater heights than most are planning for.

What About AI Security?

Vendors have touted AI in security for decades. But those early claims for AI were really about machine learning and Bayesian filters, which simply make probabilistic predictions based on past information.
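To make “probabilistic prediction based on past information” concrete, here is a minimal naive Bayes text filter, the technique behind classic Bayesian spam filters. It is an illustrative sketch only: the class name, the “bad” and “good” labels, and the training messages are hypothetical, and it does not represent any vendor’s product.

```python
import math
from collections import Counter


class NaiveBayesFilter:
    """Minimal naive Bayes text classifier with add-one (Laplace) smoothing."""

    LABELS = ("bad", "good")

    def __init__(self) -> None:
        self.word_counts = {label: Counter() for label in self.LABELS}
        self.doc_counts = Counter()

    def train(self, text: str, label: str) -> None:
        """Record one labeled example (the 'past information')."""
        self.doc_counts[label] += 1
        self.word_counts[label].update(text.lower().split())

    def score(self, text: str, label: str) -> float:
        """log P(label) + sum of log P(word | label); assumes every label was trained."""
        vocab = set().union(*(self.word_counts[lb] for lb in self.LABELS))
        total_words = sum(self.word_counts[label].values())
        log_prob = math.log(self.doc_counts[label] / sum(self.doc_counts.values()))
        for word in text.lower().split():
            count = self.word_counts[label][word]  # Counter returns 0 for unseen words
            log_prob += math.log((count + 1) / (total_words + len(vocab)))
        return log_prob

    def classify(self, text: str) -> str:
        """Pick the label with the highest posterior score."""
        return max(self.LABELS, key=lambda label: self.score(text, label))


if __name__ == "__main__":
    nb = NaiveBayesFilter()
    nb.train("win free money now", "bad")
    nb.train("claim your free prize", "bad")
    nb.train("meeting notes attached", "good")
    nb.train("lunch meeting tomorrow", "good")
    print(nb.classify("free money prize"))
    print(nb.classify("lunch meeting tomorrow"))
```

The filter never understands a message; it only compares how often each word appeared under each label in the past, which is why such systems were probabilistic predictors rather than the reasoning models that arrived with LLMs.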

You might recall that Mike Lynch, founder of Darktrace, was an early user of the term “AI” for the company’s security solutions and owned a yacht called Bayesian. Tragically, Lynch and several others perished when the yacht sank during a freak squall off the coast of Sicily. Darktrace was taken private by PE firm Thoma Bravo in 2024. Others, most notably Cylance, applied machine learning to stopping malware. As Stuart McClure relates in his story later in this chapter: “The idea was to stop looking for the fingerprints of known criminals and instead teach a machine to spot criminal intent just by looking at an object before it ran or was opened into memory.” That was 2012.

According to McClure:

“We embarked on a massive undertaking. We gathered hundreds of millions of files — both good and bad — from every corner of the internet. We fed these files into a machine learning model and trained it to discern the “DNA” of a malicious file. We weren’t looking for signatures, which are brittle and easy to change. We were looking for features, the thousands of subtle attributes and characteristics that, when analyzed together, could predict with mathematical certainty whether a file was a threat. Does it try to access the network? Does it have packed or obfuscated code? Does it call certain APIs in a specific order?

By analyzing these features in concert, our AI could make a predictive judgment on any file, even one it had never seen before.”
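The feature-based approach McClure describes can be illustrated with a toy classifier. The sketch below is hypothetical and is not Cylance’s model: the four binary features loosely mirror the questions in the quote, the eight training “files” are invented, and plain logistic regression trained by gradient descent stands in for whatever model Cylance actually used.

```python
import math

# Hypothetical binary features a scanner might extract before a file runs.
FEATURES = ["accesses_network", "packed_or_obfuscated",
            "suspicious_api_sequence", "signed_by_known_publisher"]

# Invented training "files": feature vectors in FEATURES order; label 1 = malicious.
TRAIN_X = [
    [1, 1, 1, 0], [1, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 0],  # malicious
    [0, 0, 0, 1], [1, 0, 0, 1], [0, 0, 0, 0], [1, 0, 0, 0],  # benign
]
TRAIN_Y = [1, 1, 1, 1, 0, 0, 0, 0]


def predict_proba(weights: list[float], bias: float, x: list[int]) -> float:
    """Estimated probability that a file with feature vector x is malicious."""
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))


def train_classifier(samples: list[list[int]], labels: list[int],
                     lr: float = 0.5, epochs: int = 5000) -> tuple[list[float], float]:
    """Fit logistic regression with full-batch gradient descent on log loss."""
    n, d = len(samples), len(samples[0])
    weights, bias = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(samples, labels):
            error = predict_proba(weights, bias, x) - y  # d(log loss)/dz
            for j in range(d):
                grad_w[j] += error * x[j]
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


if __name__ == "__main__":
    w, b = train_classifier(TRAIN_X, TRAIN_Y)
    for name, weight in zip(FEATURES, w):
        print(f"{name:>26}: {weight:+.2f}")
    unseen = [0, 1, 1, 0]  # packed + suspicious API calls, no network, unsigned
    print(f"P(malicious) for unseen file: {predict_proba(w, b, unseen):.3f}")
```

No signature is involved: the model scores the combination of attributes. Because packed code and suspicious API sequences appear only in malicious examples in this toy data, the model flags the vector `[0, 1, 1, 0]` as malicious even though no identical file was in the training set, a small-scale version of judging a file “it had never seen before.”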

Of course, 2012 was five years before the breakthrough of Transformers and the eventual creation of the Large Language Models we use today. McClure sold Cylance to BlackBerry for about $1.4 billion in a deal announced in 2018. BlackBerry sold the Cylance technology to Arctic Wolf, a large MSSP, for $160 million plus stock in December 2024.

Over 60 of the AI Security vendors listed in the appendix were founded in 2022. They either had early access to OpenAI’s Playground and the vision to see what would be possible, or were well positioned to pivot to protecting AI usage or to using AI to enhance a security capability.

Protect AI was early. Founder Ian Swanson, who had an extensive background in machine learning and AI at AWS, saw what was happening with early OpenAI models and realized that they would need to be protected from attacks. Protect AI nabbed $13.5 million in seed funding in December 2022, two weeks after the launch of ChatGPT. The round was led by Acrew Capital and boldstart ventures; additional participants included Knollwood Capital, Pelion Ventures, and Avisio Ventures. Three cybersecurity leaders also participated: Shlomo Kramer, Nir Polak, and Dimitri Sirota.

Palo Alto Networks acquired Protect AI for a reported $650 million in the summer of 2025. By that time, Protect AI had taken in a total of $108.5 million in venture backing.

Doppel is another AI Security vendor that was started in 2022. The company began as an AI tool for detecting crypto scams but evolved to cover all digital attack surfaces, including social media, domains, and telco. Doppel took in $70 million in a Series C round in November 2025 at a valuation of more than $600 million. The company has raised $124 million to date, and its headcount increased 75% in 2025 to 292 people.

Clearly, 2022 was a big year for AI Security vendors, and 2023 was even bigger, with 96 AI Security companies founded. They include, among many others, Clover Security, which uses AI to assist in the design phase of applications and grew 144% to 66 employees in 2025.

Adaptive Security uses GenAI to train employees with custom-generated phishing and impersonation attacks. In April 2025, it received OpenAI’s first investment in a cybersecurity company. We recorded an additional 87 AI Security companies founded in 2024, including 7AI, Airrived, XBOW, Aurascape, and Prophet.

Thus far, we have recorded only 42 AI Security startups founded in 2025, not because company creation slowed but because there is a lag between founding and becoming discoverable. Airrived, for instance, was founded in 2024 but did not come out of stealth until January 2026.

In other words, there may well be 400+ AI Security companies.

In the following chapters we introduce you to the 378 AI Security vendors we track today in the IT-Harvest Dashboard, a platform that has complete descriptions of 4,000+ vendors and their 11,400+ products.

STORY

Stuart McClure

Stuart McClure’s life’s work has been forged by a single, unwavering mission of prevention, a principle seared into his soul at age 19 as a survivor of the catastrophic United Flight 811 disaster. That moment, when he struggled to process the preventable system failure of a “faulty latch” even as he faced what seemed like certain death, became the through-line for his entire career. It drove him to co-found Foundstone and write Hacking Exposed, shifting the security paradigm from reaction to proactive defense by teaching the world to think like an attacker. Later, recognizing the inherent flaws in reactive antivirus, he founded Cylance, pioneering the use of AI to predict and prevent malware before it could ever execute. Now, through his work with WethosAI, his preventative focus extends to the most complex system of all, the human mind and how people collaborate, continuing a lifelong quest to find and fix the faulty latches in our world, whether they are in our technology or in ourselves.

Part 0: To Understand the Latch…

People often ask: what drives you? They see the companies, the books, the talks, and they try to connect the dots from a LinkedIn profile. They see a career. But I don’t see a career. I see a single, unbroken thread that runs from a childhood spent on the move for survival to a moment of sheer terror suspended 22,000 feet above the Pacific Ocean. Everything I am, everything I’ve built and pursued, it all started there.

The truth is, all the dots connect back to a single, faulty latch. But to understand the latch, you have to understand the kid who was conditioned to see it in the first place.

Part I: The Observer

My story doesn’t have a hometown; it has a series of backdrops, each one a lesson in adaptation. I was born in the sprawling, smogged out, rotted, sun-baked suburbs of Los Angeles, but my memory begins in a place that couldn’t be more different: Guam. We moved there when I was two years old. My new world wasn’t asphalt and freeways; it was the crushing humidity that hit you like a wet towel the moment you stepped outside, the constant, rhythmic crash of waves on the reef, and the sweet, heavy scent of plumeria flowers (my favorite flower) mixed with salt and damp earth.

Being a “haole” kid (a white outsider) in a predominantly Chamorro and Filipino culture was my first, and most profound, education. I was different, very different. My skin, my hair and eye color, even the food my mom packed for my lunch. When you’re the new kid, over and over, you learn to do one thing really well: you watch. You become a master observer, not by choice, but by necessity. I’d stand at the edge of the schoolyard, watching the other kids. I didn’t just see them playing; I saw the invisible architecture of their world. I saw the unspoken rules of their games, the subtle hierarchies determined by who could climb the highest in the banyan tree, the way one person’s actions rippled through the group. I learned to deconstruct social systems on the fly just to figure out where I fit, or how to avoid trouble. I remember one time, watching a group of kids play a game with marbles. I had no idea what the rules were. But I watched for an hour. I saw how they drew the circle in the dirt, how they held the shooter marble, the specific flick of the thumb that sent it spinning with deadly accuracy. I saw which marbles were valuable, which shots were risky, and which player was the unspoken leader. The next day, I walked up, and without saying a word, I played. And I fit in. That constant adaptation, that need to understand the underlying mechanics of a new place before engaging, became a part of my DNA. It was pattern recognition, born from social survival.

After almost eight years on Guam, I moved again, this time to Hawaii for a year, then to Colorado Springs for my final years of high school. Each move was another system to deconstruct, another set of rules to learn. By the time I got to the University of Colorado Boulder, I was hardwired to look beneath the surface. I couldn’t just pick a standard major; it felt too superficial. I was obsessed with the why behind everything. Why do people make the choices they do? Why do they build things in a certain way, and why do those things so often break? So I cobbled together my own curriculum, a strange brew of psychology, philosophy, and computer science. It seemed like the only way to get at the root of it all. Psychology gave me insight into the flawed, brilliant, unpredictable human mind including its biases, its motivations, its capacity for both genius and catastrophic error. Philosophy gave me the frameworks to think about logic, ethics, intent and the meaning of life. And computer science gave me the keys to a new kingdom: a world built on pure, cold, unforgiving logic. I was fascinated by the intersection of these worlds. The space where human intent, with all its messiness, met the rigid structure of a machine. I didn’t know it then, but I was building the perfect toolkit to one day get inside the mind of a hacker — to understand not just what they did, but why they did it.

Part II: The Fall

February 24, 1989. I was 19 years old, a week shy of my 20th birthday. I was on United Flight 811, flying from Honolulu to New Zealand with my mother, who had invited me to travel on to Australia to visit my stepfather, who was in Sydney for work. I was a college kid on an adventure, so despite my misgivings, I jumped at the chance. Through some stroke of luck, we were offered an upgrade to the business class section of the 747. For my mother, my younger brother, and me, sitting in Row 44 at the time, it felt like we had won the lottery. But in the moments after the offer, I had a bad feeling about what it meant, and I suggested to my mother that we stay in our current seats, since we were comfortable with the entire middle row of five seats to ourselves. So we passed.

The takeoff was smooth. We climbed through the clouds. The flight attendants started their service. It was 2:00 a.m. local time (5:00 a.m. LA time), and I was tired, so I fell asleep quickly. Then a thunderous blast unleashed the most violent chaos one could ever experience: an explosive decompression. The forward cargo door had ripped off, seats were torn from their bolts, and nine human beings who had been buckled comfortably into them were sucked out into the blackness of the night sky. One moment they were there, people with lives and families and plans, and the next, just a gaping hole and the deafening, soul-stealing roar of the wind. It wasn’t like in the movies. There’s no dramatic music, no slow motion. It was a physical assault. The air became a solid force, a hurricane tearing through the cabin, ripping at your clothes, your skin, sucking the breath from your lungs. Debris became shrapnel. The oxygen masks dropped in some sections but not others, and panic ensued. The primal screams of the passengers and the wind were overwhelming, a cacophony of sound that erased all other thoughts.

For twenty minutes, as the pilots wrestled with a crippled giant, trying to get us back to Honolulu, I was absolutely, fundamentally certain that I was going to die. In fact, I believe that my mind actually died in that moment, or at least what I thought I had as a mind then. Time warped. Seconds felt like hours. I remember looking at the horrified passengers next to me and the flight attendants racing through the cabin trying to prepare us all for a crash landing and thinking, “So this is it. This is how it ends.” A latch failed. A door blew off. This didn’t have to happen.

When we finally, miraculously, landed back in Honolulu, the silence was as shocking as the noise had been. The wind was gone. All you could hear was the sobbing of the survivors and the whine of the emergency vehicles racing toward us. Walking off that plane, past the twisted metal and the gaping hole, was like being born again, but into a different world. A world where I understood, on a cellular level, the fragility of the systems we rely on every single day.

After years of investigation, we learned that the event wasn’t a random act of God. This wasn’t bad luck. This was a multi-system failure: a chain reaction of design flaws and preventable mistakes. An ignored warning. A faulty backup latching system. Surviving that day didn’t just give me a second chance. It burned a mission into my soul. It wasn’t a choice anymore. It wasn’t a career path. It was a moral imperative. My purpose was to find the faulty latches of the world, the weak points, the hidden flaws, the ignored warnings, and fix them before they could tear another hole in someone’s life.

Part III: The Playbook

After Flight 811, I couldn’t see the world the same way. Every system, every process, every piece of technology had a potential point of failure. When I started my career at Ernst & Young, tasked with building their first cybersecurity practice, I saw the same pattern everywhere, this time in the digital world. It was the early ’90s, the dawn of the commercial internet, and companies were rushing online with a kind of gold-rush naivete. Their approach to security was terrifyingly familiar. They were waiting for the breach to happen, for the “cargo door” to blow off their network, before they even thought about security. They saw it as an expense, a nuisance. Security was the cleanup crew you called after your customer data was splattered all over the internet. This reactive posture felt viscerally wrong to me. It was the same flawed thinking that had put me in the sky that night. I knew I had to do something different. I couldn’t just be part of the cleanup crew. In 1999, I left the comfort of a big firm and, with my co-founders, took the entrepreneurial leap. We started Foundstone. Our mission was simple but revolutionary for its time: to stop a hacker, you have to become one. We would be the “ethical hackers,” the ones who would show you exactly how the door would fail before the real storm hit. We pioneered the field of penetration testing, not as a theoretical exercise, but as a full-contact sport. We’d break into a company’s network — with their permission, of course — and then walk into their boardroom and show them, step by step, how we did it.

The reactions were always the same. First, disbelief. Then, anger. Finally, a dawning understanding. We weren’t just showing them vulnerabilities; we were changing their entire mindset.

But showing a few dozen companies wasn’t enough. The problem was systemic. We had to get this knowledge out there, to democratize it, to arm the people on the front lines. That’s why I wrote Hacking Exposed. I made a conscious decision to do something that was considered almost heretical and decided to publish the attacker’s playbook. People thought we were crazy. The publisher was nervous. Other security professionals accused us of being irresponsible, of writing a manual for the bad guys. They didn’t get it. The bad guys already knew this stuff; they were inventing it. It was the defenders, the sysadmins in the server rooms, the everyday IT folks, who were in the dark. They were fighting a war without knowing the enemy’s tactics. We weren’t giving away secrets; we were arming our own side. The book was a sensation because it was real. It was a direct manifestation of my core belief: to stop a threat, you must first understand it completely. Every copy we sold felt like installing a stronger latch somewhere in the world. When McAfee acquired Foundstone in 2004, I transitioned from scrappy startup founder to the Worldwide Chief Technology Officer of a global security giant. From that perch, I had a panoramic view of the entire threat landscape. I could see the data streams from millions of endpoints around the world. I could see the cyber arms race in real time. And what I saw scared me more than anything since Flight 811.

The reactive model we were all using, the signature-based antivirus model that McAfee itself championed, was fundamentally, mathematically, doomed to fail. The volume of new malware was exploding, growing at an exponential rate. For every new threat, a security company had to capture it, analyze it, create a signature, and push that update out to millions of machines. But the attackers could generate new, unknown malware, “zero-day” threats, infinitely faster.

I was sitting in meetings where we were pouring hundreds of millions of dollars into a system that was, by its very design, always one step behind. We were building a bigger, more expensive version of the same reactive mousetrap, while the attackers were breeding new mice faster than we could ever hope to catch them. I saw another catastrophic system failure on the horizon. The industry’s fundamental approach to security was the new faulty cargo door latch. It was only a matter of time before it blew wide open.

Part IV: The Prediction

The weight of that realization was immense. I felt like a fraud, sitting at the top of a security company, knowing that our core strategy was a losing game. I couldn’t shake the feeling that we were all complicit in a failing system, selling a false sense of security. I tried to change things from the inside, but the corporate immune system is a powerful thing. The company was a massive ship, and turning it was like trying to turn a glacier. I knew I had to do something, and it had to be completely different. I had to jump off the ship and build a new one.

I left McAfee with a singular, audacious goal that most people in the industry considered insane: I was going to solve the problem of malware. Not chase it, not clean it up after the fact. Solve it. Prevent it from ever running.

In 2012, I founded Cylance. The name itself was a portmanteau of “cyberspace” and “silence,” representing the goal of silencing the noise of cyberattacks. The vision was to move beyond reaction entirely. I didn’t want to detect attacks faster; I wanted to prevent them from ever executing in the first place. The key, I believed, was artificial intelligence. At the time, using AI and machine learning for cybersecurity was a fringe concept, often dismissed as marketing fluff or pure science fiction. But my team and I were convinced it was the only path forward. The idea was to stop looking for the fingerprints of known criminals and instead teach a machine to spot criminal intent just by looking at an object before it ran or was opened into memory.

We embarked on a massive undertaking. We gathered hundreds of millions of files — both good and bad — from every corner of the internet. We fed these files into a machine learning model and trained it to discern the “DNA” of a malicious file. We weren’t looking for signatures, which are brittle and easy to change. We were looking for features, the thousands of subtle attributes and characteristics that, when analyzed together, could predict with mathematical certainty whether a file was a threat. Does it try to access the network? Does it have packed or obfuscated code? Does it call certain APIs in a specific order?

By analyzing these features in concert, our AI could make a predictive judgment on any file, even one it had never seen before. It was the ultimate expression of my preventative philosophy.

Cylance’s AI didn’t need cloud lookups, daily updates, or prior knowledge of an attack. It made its decision pre-execution, in milliseconds, right on the endpoint. It was the digital equivalent of an engineer inspecting the cargo door latch and knowing, just from its metallurgical properties and design, that it was going to fail under pressure, without ever having to see it fail first.

Bringing Cylance to market was a brutal, uphill battle. We were challenging the multibillion-dollar antivirus industry and its decades-long dogma. We were heretics. In meeting after meeting, we told customers that the security blankets they had been sold for years were full of holes. We had to prove it, over and over. We did live demonstrations, which we called “The Unbelievable Tour,” pitting our AI against the latest zero-day threats in real time. We’d download hundreds of brand-new malware samples that had just appeared online, drop them on a machine protected by our competitors, and watch them detonate. Then we’d do the same on a machine protected by Cylance, and… nothing. Each file would be quarantined before it could even twitch.

Slowly but surely, the tide began to turn. The company’s success was meteoric because the proof was undeniable. We were redefining endpoint security, proving that a preventative approach was not only possible but vastly superior. The journey culminated in 2019 when BlackBerry acquired Cylance for $1.4 billion. As President of BlackBerry Cylance, I worked to integrate this forward-thinking technology into a broader security ecosystem, bringing predictive security to a global scale.

Cylance was more than a company to me. It was the logical and emotional successor to the mission that started in the wreckage of Flight 811. It was proof that with the right approach and relentless innovation, you could build a system that prevents disaster by design.

Part V: The Human System

But as they say, an innovator’s work is never truly done. After Cylance, my focus didn’t change; it expanded. The core question that has driven my entire career remained my guiding star: “How do we stop the problem before it starts?” So I founded a new AI incubator, NumberOne AI, to tackle the next generation of challenges. This led to the creation of companies like WethosAI and to the transformation of the AppSec company Qwiet AI. With Qwiet AI, we applied the prevention principle to the very beginning of the technology lifecycle: the software development process. Instead of waiting for vulnerabilities to be discovered in finished products (another form of reactive security), we used AI to find and fix security flaws in the code as it was being written. It’s about shifting security “left,” making it an integral part of creation, not a final inspection. We are, in essence, helping developers build better, stronger cargo door latches from the very start, embedding security into the blueprint.

But my journey has also led me to a more profound, and perhaps more difficult, realization. The most complex, volatile, and critical system of all is not made of code or silicon. It’s us. It’s the human system. I’ve spent a lifetime analyzing how technical systems fail. But as I built and led multiple companies, I saw that the biggest vulnerabilities in any organization are often not in its firewalls but in its communication, its collaboration, and its team dynamics. Misunderstandings, cognitive biases, and conflicting work styles are the “zero-day threats” of human interaction. They can derail projects, destroy morale, and stifle innovation just as effectively as any piece of malware. A brilliant team can be brought to its knees not by a competitor, but by internal friction. It’s another kind of preventable disaster.

This insight is the driving force behind my latest venture, WethosAI. Here, the prevention mission turns inward. We are building an interpersonal productivity platform that uses AI to help individuals and teams understand their innate work styles and cognitive patterns. The goal is to proactively identify and address the friction points that lead to conflict and inefficiency. By making people aware of their own “human code” (how they’re wired to solve problems, communicate, and handle stress) and that of their colleagues, we can prevent misunderstandings before they arise. We can foster more effective collaboration and unlock a higher level of collective intelligence. It’s about preventing the “human error” that is so often the root cause of systemic failure.

When I look back at the arc of my life — from the nomadic kid learning to deconstruct new social systems, to the university student blending psychology with computer science, to the survivor of a catastrophic system failure — I see a single, unbroken thread. My life’s work has been a relentless, sometimes obsessive, quest for prevention.

The terror of Flight 811 was not its end, but its beginning. It taught me that the most devastating failures are not random acts of fate. They are the predictable outcomes of vulnerabilities we have the power to find and fix. My leadership and entrepreneurial spirit have always been a direct reflection of this belief. I don’t build companies just to create technology or generate revenue; I build them to solve problems at their source. From authoring Hacking Exposed to arm defenders with knowledge, to building Cylance to predict and prevent attacks, to now developing WethosAI to harmonize human collaboration, my mission has evolved, but its core has remained the same. It is a mission to move the world from a state of perpetual cleanup to one of intelligent foresight. It is a powerful reminder that sometimes the most profound purpose can be found in the unwavering commitment to honoring the promise you made to yourself in the terrifying quiet after the wind stopped roaring.

It’s about making sure that what went wrong once never, ever has to go wrong again. And that is a mission I will continue for the rest of my life.